What Is AI Readiness?

An Infrastructure-First Framework for Risk-Aware Organizations

Most organizations are adopting AI faster than they are governing it.

This is not a moral claim or a technological critique. It is an observable structural condition. AI tools are now embedded in productivity software, vendor platforms, and everyday workflows. Their use no longer requires formal deployment, technical expertise, or executive approval.

As a result, AI exposure is increasing even in organizations that believe they have not “adopted AI.”

This creates a new category of risk—one that is not well addressed by existing AI strategy models, ethics frameworks, or data science maturity curves.

That category is AI Readiness.

Defining AI Readiness

AI Readiness is the condition in which an organization’s infrastructure, governance, access controls, logging, vendor exposure, and operational discipline are sufficient to support AI usage without introducing unmanaged risk.

AI readiness is not about innovation speed.

It is not about model selection.

It is not about building AI systems.

It is about whether the organization is structurally prepared for the reality that AI systems are already influencing how data is processed, decisions are supported, and work is performed.

In this sense, AI readiness is a prerequisite condition, not a capability.

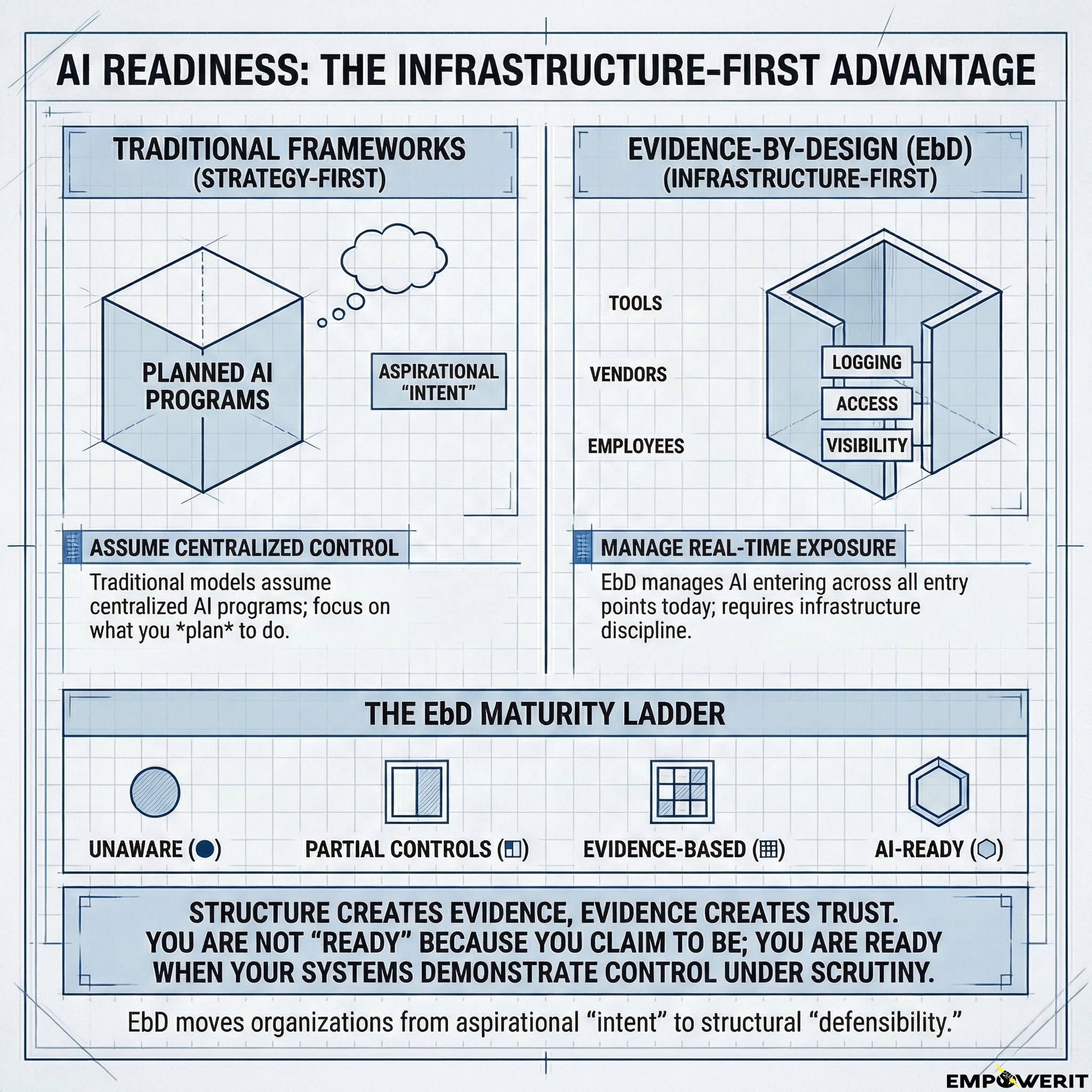

Why Existing AI Maturity Models Fall Short

Most existing AI maturity models focus on one of three domains:

Strategy and culture (leadership intent, AI vision, talent)

Data science and engineering (pipelines, models, MLOps)

Ethics and policy (fairness, explainability, accountability)

These frameworks are valuable—but they assume deliberate, centralized AI programs.

They do not adequately address a more common reality:

AI enters organizations through tools, vendors, and employees long before it enters through formal programs.

AI readiness addresses this gap. It asks a different question:

Is the organization structurally prepared for AI exposure today, regardless of intent?

AI as an Exposure Vector

In 2026, AI should be treated less like a discrete system and more like an exposure vector, similar to:

Cloud services

SaaS platforms

Identity sprawl

Third-party integrations

This reframing matters because exposure vectors are governed through infrastructure discipline, not aspiration.

AI readiness therefore depends on conditions that already matter for resilience, compliance, and insurability, but are often unevenly implemented.

The Evidence-by-Design Alignment

This framework is intentionally aligned with Evidence-by-Design (EbD) principles.

EbD asserts that:

Structure creates evidence

Evidence creates trust

Trust enables defensible decision-making

AI readiness follows the same logic.

An organization is not “AI-ready” because it claims to be careful.

It is AI-ready when its systems can demonstrate control, visibility, and accountability under scrutiny.

This is why readiness must be infrastructure-first.

The AI Readiness Pillars

While implementation details vary by organization, AI readiness consistently depends on a small set of structural pillars:

Identity & Access Control

Clear ownership of accounts, permissions, and AI-enabled tools.

Data Boundary Clarity

Understanding what data can be accessed, processed, or shared by AI systems.

Logging & Observability

The ability to reconstruct what happened, when, and through which system.

Vendor & Tool Exposure Mapping

Visibility into where AI capabilities exist across third-party platforms.

Backup, Rollback, and Recovery Discipline

Confidence that AI-mediated actions do not compromise recoverability.

Policy & Operational Guardrails

Practical, enforceable guidance on acceptable AI use: not aspirational statements.

These pillars are not new. What is new is the speed and opacity with which AI bypasses weak versions of them.
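One way to make the pillars concrete is to keep a small inventory record for each AI-enabled tool and check which pillars lack evidence. The sketch below is illustrative only: the `AIToolRecord` fields, the `pillar_gaps` helper, and the example tool name are assumptions made for this example, not part of the framework itself.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIToolRecord:
    """One AI-enabled tool or vendor feature. All field names are illustrative."""
    name: str
    owner: Optional[str] = None                              # Identity & Access Control
    data_classes: List[str] = field(default_factory=list)    # Data Boundary Clarity
    logs_retained: bool = False                              # Logging & Observability
    vendor_reviewed: bool = False                            # Vendor & Tool Exposure Mapping
    rollback_tested: bool = False                            # Backup, Rollback, and Recovery
    policy_reference: Optional[str] = None                   # Policy & Operational Guardrails


def pillar_gaps(record: AIToolRecord) -> List[str]:
    """Return the pillars for which this record holds no evidence."""
    checks = [
        ("Identity & Access Control", bool(record.owner)),
        ("Data Boundary Clarity", bool(record.data_classes)),
        ("Logging & Observability", record.logs_retained),
        ("Vendor & Tool Exposure Mapping", record.vendor_reviewed),
        ("Backup, Rollback, and Recovery Discipline", record.rollback_tested),
        ("Policy & Operational Guardrails", bool(record.policy_reference)),
    ]
    return [pillar for pillar, satisfied in checks if not satisfied]


# Hypothetical example: an assistant feature enabled by default in a SaaS suite.
assistant = AIToolRecord(
    name="office-suite-assistant",
    owner="it-ops",
    logs_retained=True,
)
for gap in pillar_gaps(assistant):
    print(gap)
```

A record like this is deliberately crude. Its value is that a gap becomes a named, reviewable item rather than an impression.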

The AI Readiness Maturity Ladder

To make AI readiness assessable, it must be observable.

The following maturity ladder provides a shared language, aligned with EbD symbology and meaning.

● Unaware

AI usage exists, but is unrecognized or ignored.

There is no visibility into where AI is present or how it is being used.

◧ Partial Controls

Some controls exist (often inherited from IT or security), but they are incomplete or inconsistently applied.

AI exposure is largely unmanaged.

▦ Evidence-Based

Key controls are in place and demonstrable.

AI usage can be explained, reviewed, and defended with evidence.

⬡ AI-Ready

AI exposure is intentional, observable, and governed.

The organization can adopt or restrict AI capabilities without destabilizing its risk posture.

This ladder is not aspirational.

It is diagnostic.

Most organizations today sit between ◧ Partial Controls and ▦ Evidence-Based, often without realizing it.
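To show how the ladder could be applied diagnostically, here is a minimal sketch that maps observed conditions to a rung. The decision rules are assumptions chosen for illustration (the framework describes the rungs but does not prescribe a scoring method), and the names `Level`, `classify`, and the status strings `"absent"`, `"present"`, and `"evidenced"` are hypothetical.

```python
from enum import Enum
from typing import Dict


class Level(Enum):
    UNAWARE = "● Unaware"
    PARTIAL = "◧ Partial Controls"
    EVIDENCE_BASED = "▦ Evidence-Based"
    AI_READY = "⬡ AI-Ready"


def classify(
    ai_usage_visible: bool,
    pillars: Dict[str, str],
    exposure_governed: bool,
) -> Level:
    """Map observations to a rung of the ladder.

    Assumed rules (illustrative, not defined by the framework):
      - no visibility into AI usage             -> Unaware
      - any pillar not backed by evidence       -> Partial Controls
      - every pillar evidenced                  -> Evidence-Based
      - evidenced and exposure is governed      -> AI-Ready
    """
    if not ai_usage_visible:
        return Level.UNAWARE
    all_evidenced = bool(pillars) and all(
        status == "evidenced" for status in pillars.values()
    )
    if not all_evidenced:
        return Level.PARTIAL
    return Level.AI_READY if exposure_governed else Level.EVIDENCE_BASED


# Hypothetical assessment: controls exist, but only some can be demonstrated.
observed = {
    "Identity & Access Control": "evidenced",
    "Logging & Observability": "present",
    "Vendor & Tool Exposure Mapping": "absent",
}
print(classify(ai_usage_visible=True, pillars=observed, exposure_governed=False).value)
```

The point of the sketch is the ordering of the checks: visibility comes first, evidence second, and deliberate governance last, which mirrors how the rungs are described above.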

What AI Readiness Is Not

To avoid confusion, it is important to be explicit.

AI readiness is not:

An AI strategy

A transformation initiative

A technology rollout

A compliance certification

A claim of superiority

It is a condition of preparedness.

Just as an organization can be cloud-ready or audit-ready without pursuing aggressive change, it can be AI-ready without accelerating adoption.

Why AI Readiness Precedes AI Adoption

Adopting AI without readiness does not increase innovation: it increases asymmetry.

Asymmetry between visibility and usage

Asymmetry between responsibility and control

Asymmetry between risk exposure and evidence

Over time, these asymmetries surface during moments of stress:

Insurance renewal

Regulatory inquiry

Vendor incidents

Internal disputes

External audits

AI readiness does not eliminate risk.

It ensures that risk is bounded, explainable, and defensible.

A Closing Note

AI readiness is not a movement slogan or a competitive claim.

It is an emerging infrastructure reality.

As AI systems increasingly influence perception, decision-making, and operational flow, organizations will be judged less on whether they “use AI” and more on whether they can account for it.

Readiness is how that accounting begins.

Next Steps

Organizations seeking to understand their current position on the AI readiness ladder should begin with a structured assessment focused on evidence, not intention.

AI adoption can wait.

Structural readiness cannot.